You have heard of bacon. It is usually made of side pork (Americans call it pork belly). You have heard of back bacon (Americans call it Canadian bacon). Have you heard of Buckboard Bacon. Bacon is quite fatty. Back bacon is quite lean. Buckboard is kind of in between.

Bacon is made of pork belly which is heavily streaked with fat. Back bacon is made from pork loin, which is quite lean. Buckboard Bacon is made from pork shoulder or butt roast (which is also part of the shoulder). This has large pieces of lean meat with wide streaks of fat.

The last time my brother came to visit, he brought some wonderful cuts of beef I can’t get easily here. I am going to visit him so I wanted to do something in way of thanks. I thought brining him something he may not have tried before was a good idea so I made some Buckboard Bacon for him.

I will start with some basics of making bacon. You have to use curing salts to make bacon. Curing salts are a mixture of salt and sodium nitrite.

The curing salts inhibit bacterial growth during the long smoking process. If you cold smoke meat for hours without curing, it will go bad.

The curing salts also give bacon its distinctive colour and taste.

Curing salts go by many names, Instacure #1, Prague Powder #1, Pink Salt and many more. Just make sure it is 6.25% sodium nitrite and the rest is salt.

The problem is that too little curing salt will not protect the meat and too much can make you sick. It is imperative you use the right amount.

There are two ways of introducing curing salts. One is a dry rub and the other is a brine where they are mixed with water. I prefer the dry rub for bacon and the brine for hams. I will be using the dry rub here.

When you are using a dry rub, you must have the exact amount of curing salts for each piece of meat. This means that, if you have more than one piece, you must do each piece separately so that you can make sure you have the right amount of curing salts.

I would like to strongly recommend you get a small scale to measure the curing salts by weight. It is just more accurate and you will get a better result.

Not all bacon is smoked. Several European countries have a tradition of making bacon without smoking. So, if you don’t have a smoker, you could still make this bacon by not going through the smoking process. After rinsing the cure off the bacon and soaking it, just put it in a 180 F oven until the internal temperature is 140 F. It is delicious but quite different without the smoky taste.

Also, if you don’t have a cold smoke generator, you can skip the cold smoke and just hot smoke it in a smoker. It will be delicious but will have less smoke taste.

Let’s get to it.

I started with a large boneless butt roast. You can buy one bone in and debone it if you like. The boneless butt was just on sale.

I untied the roast and laid it open. You want to cut off any really thick pieces of fat and try and cut the roast into pieces that are between 2 and 2 1/2 inches thick. The width and length doesn’t matter. Save any trimmings for making sausage.

I was able to get 4 pieces from the roast I got. Normally I like them wider than I cut these. However, I suspect the butcher who deboned this roast and rolled it had been enjoying recreational pharmaceuticals. It was pretty hacked up. I had to cut out some loose pieces.

As I had 4 pieces and my brother had been so generous. I decided to do them 4 different ways to give him a variety. I will start with basic bacon and then show you how to do the variations.

You need to start by weighing the piece of meat you will be curing. The piece I chose for a basic cure weighed 649 grams.

For each kilogram of meat, the basic bacon cure is:

- 3 grams (2 ml) Prague Powder #1

- 40 ml brown sugar

- 15 ml kosher salt

If you are one of my metrically challenged readers, use the following amounts per pound of pork loin. The ounces refer to weight.

- 0.05 ounce (1/5 teaspoon) Prague Powder #1

- 4 teaspoons brown sugar

- 1 1/2 teaspoon kosher salt

Just multiply these amounts by the number of kilograms (or pounds) in the piece of meat. So, I multiplied by 0.649 and made a cure of:

- 2 grams Prague Powder #1

- 25 ml brown sugar

- 10 ml kosher salt

This is not a time to estimate amounts. Be careful and measure accurately.

Mix the cure ingredients together.

Put the meat on a tray or plate. Rub the mixture into all sides. Put the meat and any curing mix that falls onto the plate into a large resealable bag. It is important you get as much of the curing mix as possible in the bag.

The bacon needs to sit in the fridge to cure. The length of time it cures depends on the thickness of the thickest part of the meat. For every inch of meat, cure for 4 days and then add 2 days. The thickest part of the meat was 1 1/2 inches. This means the meat has to cure for 8 days (4 times 1 1/2 equals 6 plus 2 equals 8).

I left the meat in the fridge for 8 days turning it and rubbing the liquid that forms into the meat every day or so.

I took the meat out of the bag and rinsed it off under running water. Then I soaked it in cold water for 40 minutes, changing the water once.

The smoke will give a better taste if the surface of the bacon is dry. I put the meat on a rack and dried it with a paper towel. I let is sit for 15 minutes and dried it with a paper towel. I continued this until the meat stayed dry after sitting for a 15 minute period. It took about an hour in total.

I did not turn my Louisiana Grills Pellet Smoker on. I put my A-Maze-N tube smoker in with hickory pellets and lit it. I put the bacon in and let it cold smoke for 6 hours. Then I put it in the fridge, uncovered overnight.

If you are not set up for cold smoking, you can skip this step and just hot smoke it.

I fired the Louisiana Grills smoker up to 180 F and put the meat on a rack over a tray and into the pellet smoker. I smoked to an internal temperature of 140 F.

I covered the meat and put it in the fridge for 2 days to let the flavours blend.

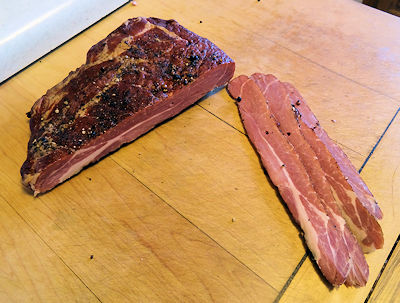

I sliced the meat up and wrapped it for freezing.

Following are the 3 variations I did. Other than the noted changes, the other 3 pieces were processed in the same method.

Berbere Buckboard Bacon

I added 1.5 ml Berbere spices per kilogram of meat to the curing mix.

Maple Bacon

I didn’t add the brown sugar to the cure. I took the same amount of maple syrup and injected it into the piece of meat prior to rubbing the other curing ingredients into the bacon.

Pepper Bacon

I rubbed 10 ml coarse ground per 1 kilogram of meat onto the surface of the piece of meat after rinsing and soaking.

Of course, I had to try the bacon, just for quality control purposes. I was giving it to a great brother. I fried some of each up and had the samples with home fries for breakfast.

The Verdict

I love buckboard bacon. It is coarser than back bacon and leaner than bacon. I love the chew and the fat level. If you haven’t tried it, you are missing something.

As for the variation, the berbere spice gives just a touch of heat. No burn, just a nice warmth.

The maple syrup adds a different sweetness. It will not taste like the commercial maple bacons. They inject flavourings. This just has a kiss of maple that is a nice note.

The pepper adds a touch of spice that mostly comes as an after note.

I love them all. I would say the berbere is my favourite but they all are great and you must have variety in your life!

The Old Fat Guy

Ingredients

- 1 kg pork butt roast trimmed between 1 1/2 to 2 1/2 inches thick

- 3 grams Prague powder #1

- 40 ml brown sugar

- 15 ml kosher salt

Instructions

- Mix Prague powder, brown sugar and salt together.

- Put the pork on a plate and rub the cure mixture into all surfaces.

- Put the pork in a resealable bag and get as much of the curing mixture that fell off onto the plate into the bag.

- Put the bag in the fridge for 4 days for every inch of the thickest part and an additional two days (ie 2 inches would be 10 days)

- Turn the meat and rub any liquid in the bag in every day or so.

- Take the meat out and rinse it under cold water.

- Soak the meat in cold water for 40 minutes, changing the water once.

- Put the meat on a rack and dry it with a paper towel. Let it sit for 15 minutes and dry with a paper towel again.

- Continue drying until the surface feels dry and tacky, about 1 hour.

- Cold smoke the bacon for 6 hours with hickory smoke.

- Let the bacon sit in the fridge uncovered overnight.

- Hot smoke the bacon at 180 F to an internal temperature of 140 F.

- Let the bacon sit in the fridge, covered, for 2 days.

- Slice and serve or freeze.

40 Responses

I had never heard of buckboard bacon, but it looks delicious!

I really like it. It is a bit tougher than Canadian bacon but has a great flavour!

I have never heard of buckboard bacon. I think it looks delicious.

It is nice and we do enjoy it.

Finally a basic cure recipe!!! Thanks…but kind of a stupid question here…I Have 2 pieces of meat….one is 3.3 pounds and one is 4.2…..I made two separate batches…but because I lost my focus I swapped out the mixes….meaning one didn’t get enough and one got too much….can I still save it or combine the two pieces into one bigger bag?

It is not optimal but I would put both pieces into one bigger bag or one will be under cured and the other overcured. Take extra care to manipulate the bag every day to get the cures rubbed into all surfaces of the meat. Fortunately, the sizes aren’t that different and all should be well.

Dave, thanks for the input….I guess I forgot one point…I did catch myself before I applied the larger amount to the smaller piece. So…what I did was just took a bit from that dry rub batch and applied to the larger piece. (I think the pink salt different was around .05 grams.) but I do feel better from your feedback. I will combine them and pay attention to manipulate them daily. Moreover…I will do a better job at staying focused! Thanks…

No problem! I think you are really going to like it. I love buckboard bacon!

Hey Dave thanks for the video. I found it through Reddit as someone had posted it in the BBQ group. I have brined my pork butt and am planning on smoking it this weekend. I just have a few quick questions. Do you prefer slicing then freezing the bacon, or do you ever freeze larger pieces that you thaw and then slice when you go to use it. I’m just curious if one method is better for some reason. Also just curious if you are still a fan of cooking to 140 as per the recipe above. There is lots of variation in the online literature.

Again thanks for the recipe and vid, just letting you know there are people who appreciate your work.

Kevin

Hi, Kevin. Thanks for the kind words.

I slice the bacon first and then package it. The reason is that there is just the two of us and small packs of sliced bacon are convenient to take out of the freezer. It is really up to you. If you have a larger family or like slicing just before cooking, there is no problem with freezing it in chunks and slicing after thawing. It is really just personal preference.

As to cooking to 140 F, there are 3 choices of smoking methods and all work well and have their advantages and disadvantages.

The first choice is to just cold smoke without heat at all. You smoke for as long as you want depending on personal tastes. If you like a light smoke, 4 hours is fine. Others go overnight and some real smoke hounds do over a day. I suggest you try the lower end and increase to your tastes. The advantage to this is you are cooking from raw. Some say it gives the bacon better texture (I think any improvement is marginal). The disadvantage is the bacon is softer and harder to slice.

The second choice is to hot smoke to an internal temperature of between 130 and 140 F. The advantage is that the bacon will be partially cooked and a little firmer for slicing. The disadvantage is the suggested loss in texture. Also, you can not expose the bacon to smoke for as long a period as you can with cold smoking if you want a very strong smoke taste.

The third choice is to smoke to an internal temperature of 155 to 160 F. The advantage to this is that the bacon is fully cooked and can be eaten cold in a sandwich without further cooking. Also, it is easy to slice. The disadvantage is more of a loss of texture.

You can combine the cold smoke with a hot smoke for double smoked bacon. Give it a cold smoke for about four hours, let it sit overnight and hot smoke to temperature. This gives a strong smoke flavour with the firmer easier to slice bacon.

I have tried all these methods and they all turned out great. It is really a matter of personal preference. Some will tell you the only way to smoke bacon is one of the three but, trust me, they all give a good result. Personally, I prefer double smoked but She Who Must Be Obeyed likes a lighter smoke hit so I smoke to 140 F quite often.

My best advice, try one method and then another. Keep notes and decide which you like best.

Wow. Great info and very much appreciated. This is my first batch of bacon, and I have already learned that the most difficult part of the process is having the patience to complete all of the steps. Thanks again for your input.

I should warn you about making bacon. It is addictive. Once people try it, they can’t stop. There are no 10 step programs or help. You will be doomed to making great bacon. Just thought you should know.

Very addictive. I’m just smoked my second 10lb batch in a month. (Bacon makes great gifts for friends and family). My question is about the dry cure ratios. In your video the ratio of salt to sugar is 1 to 1. In the recipe above it uses 40ml of sugar to 15ml of salt. Is this strictly a flavor preference?

Thanks again for all the help.

In the video I am making Maple Buckboard Bacon (see the post https://oldfatguy.ca/?p=5530) and I inject the pork with maple syrup which has a lot of sweetness. This post is for regular buckboard bacon which doesn’t have any maple syrup added so more sugar is needed in the cure.

Can you please give me the weight of the salt and sugar in grams? Thank you!

Brown sugar weighs 0.93 grams per ml so 40 ml is 37.2 grams. Kosher salt is 1.3 grams per ml so 15 ml is 20 grams. The exact amount of brown sugar and salt isn’t as important as the exact amount of curing salts.

A good rule of thumb for bacon making is to salt to 2% – 2.5% of the total weight of the meat. So, 20 – 25g per kilo. Adjust up or down depending on taste/preference.

Great starter recipe David – this is the first one I did, and has formed the baseline for all my tweaking from that point forward.

Thank, Paul.

Does it matter which direction it’s sliced? With or against the grain that is?

It is better against the grain but that can be difficult with pork butt or shoulder as the muscles run in different directions. Just cut as much as possible against the grain.

Not sure if you are still monitoring this post, but do I have to use any sugar? I don’t typically eat sugar/carbs and would love to try this.

It really does need a touch of sweet. You can cut the sugar back to about 1/2 of what I use (I like the sweet). You could also try stevia or one of the other super sweeteners but I haven’t tried that myself. If you wanted to try it without the sugar at all. It will cure and give the salted pork taste and a touch of smoke but I suspect it might be a little bland.

Thanks for your reply. I’ll give it a shot with an erythritol/monk fruit mix. Again, thank you!

I’d love to hear how it turns out!

Just to chime in ? I also follow keto/low carb and I have cured belly bacon and buckboard bacon with several different sweeteners at different times. Those included: a very small amount of regular sugar. The ratio of meat to sugar in the dry rub adds minimal carbs. The same for coconut sugar; or even blackstrap molasses that I sometimes add to the meat 2-3 days before it is due to come out. I think because the bacon is heavily rinsed and then soaked, it leeches some of the sugar as well as the salt. It is minimally sweet but enough to add a nice flavor.

Thanks for the info!

Hi there,

Yesterday i cured my first batch of pork butts. I bought 2 from Save On Foods, and had about 3 x 1KG pieces and a smaller 600gram piece. I followed your Youtube video instructions, but as i had no injector i left out the Maple Syrup. I read about another person had same question about quantities. So i used 3grams pink salt with a 15ml sugar and salt. Im concerned about my meat now. Will it be safe? Will it taste too salty? Any help would be great please 🙂

If you used 3 grams of pink salt #1, 15 ml kosher salt and 15 ml brown sugar per kg of pork, you will be fine. I like my bacon sweeter so I would use 25 ml brown sugar per kg if I wasn’t using maple syrup. However, many don’t like their bacon as sweet and use 15 ml. In short, it will be fine if you used 3 ml pink salt, 15 ml kosher salt and 15 ml sugar per kg of pork. So for the 600 gram piece, you would have used 1.8 ml pink salt, 9 ml kosher salt and 9 ml brown sugar.

The measure that is critical is the pink salt #1. You can increase and decrease the sugar and salt to your tastes as long as you use at least 10 ml of kosher salt per kg as it is needed to pull the moisture out.

Let me know if any of this isn’t clear or I can help further.

also…..

I just check the pork butts, as i wanted to check the thickness i had (2.5 – 3.0 inches thick). I have noticed that there is hardly any water/moisture drawn from the pieces of meat. Should i need to worry about this? Kinda looks to me like all the cure has dissolved and nothing is happening. I only cured them yesterday afternoon so maybe need to give it more time?

Cheers again.

The amount of moisture given off varies widely depending on the way the meat was processed and stored. Don’t worry about it. As long as the cure is coming into contact with the meat, all is well.

Thanks for your quick reply sir!

Yes for the 1kg pieces i used:

3 grams pink salt

15ml of salt

15ml sugar (organic golden sugar…just what i had in house)

for the 600g piece:

1.5 grams pink salt

8ml salt

8ml sugar

I will eat this piece first so figure i should be ok?

The meat just now looks like its in almost a natural state, like it never had cure rubbed in to it,so i guess that why im kid of concerned along with lack of liquid.

The meat was from Save On Foods, seems like a decent cut to me.

Thanks once again David

Nick

Sounds fine. All should be well! Once you cook it, you can adjust for more or less salt, sugar, etc to your own tastes. It is the best part of making your own!

So today is day 4 of the cure, i will check it tonight when home to see if more moisture has been driven out of the mat. Question for you, when i cured bacon (loin) in the past, i used just sugar/salt of equal parts, rubbed on daily to the meat. Why is this is not required to apply more salt to the meat? Im obviously not talking about pink salt. Just regular salt.

Cheers once again!

The reason you let meat sit for a number of days is that the salt/sugar/curing salt only go into the meat to a depth of 1/4 inch a day. If you put more salt on 2 days before it is finished, it will only go 1/2 inch into the meat. The whole idea of dry curing is that a measured amount of dry cure mix works its way into the meat and gradually develops on equilibrium in all parts of the meat. If the proportions are correct, there is no need to work any more in. As a matter of fact, any added later would disturb the equilibrium.

Just wanted to follow up! This worked out beautifully 🙂

Couple of observations from me, that might help others

1. Hardly any liquid from the curing, noticed after about 7 days the color of the meat inside was a rich pink color as opposed to the grey-er on the outside

2. Did not use enough sugar! i did not account for the maple syrup which i did not use.

3. Rinsed off salt and let meat soak for 1hr, changed water at 30 mins. Next time i will soak for just 30 mins as i feel the end product could be a little saltier for my pallet.

4. Smoking – Done on a Weber gas grill 3 burner. Indirect cooking with the far left on very low and got grill to 180. Used the Amazing smoke tube. Wow! This creates A LOT of smoke! lasted for about 2hrs. Did not refill once it went out. Will refill next time just to get as much smoke flavor as possible. Keep the grill at 180 was a pain in the ass…..any tips welcomed!

5. Cook – Meat chunks were about 2.5 to 3 inches thick. The cook was done in less time that i expected. I think over all it was about 2.5hrs!! the internal temp was 140 when i removed so i assumed it was ok. Maybe this was due to the conditions as was quite warm outside when i did the cook?

6. Tasting – Sensational! I wrapped it in wrap for 2 days, then sliced and ate. Was not as salty as i would like and lacked the sweetness which i knew already.

7. Next time – Yes i will be doing this now all the time! Im going to try some more Buck Board and will tray belly and back. Also will add some flavors like cracked pepper on outside.

All: Really dont need to go buy a 600 dollar smoker! i spent 25 bucks on a smoke tube that works like a dream.

David: Thanks mate! I bought your book and sending it home to my dad for his fathers day gift!

Thanks for the feedback. Some responses.

Sugar is a matter of personal taste but not enough and it is a little bland.

The rinsing does not significantly increase the saltiness of your bacon. It just removes surface salt. If you want more salty flavour, increase the Kosher salt in you dry cure but be careful, a little more salt makes it a lot more salty.

If you want to smoke your bacon in a gas grill. Don’t turn the gas grill on at all. Just light up the tube smoker and put it in the grill with the bacon and cold smoke to the desired level. I would start at 4 hours but I have friends who go up to 48 hours! The problem is too much smoke is unrecoverable so increase the time in small increments until you get to where you want. Then you have two choices. You can leave it like this, slice and cook. However, with no heat, the bacon is harder to slice. If you want to make it easy to slice, put it in a 180 F oven to an internal temperature of 140 F or 155 F if you want it fully cooked so you can eat it cold. The cold smoke gives the smoke flavour and the oven is easy to control to get the doneness you like.

There are a world of flavours and styles. A buddy just gave me some hog cheek bacon! To die for.

Thanks so much for buying the book. I am just about finished a second book aimed at more experienced smokers with recipes for smoked cheese, bacon, ham, cured sausage, corned beef, pastrami and different beef, chicken, pork, seafood and sides recipes. I should finish soon but the publishing process seems to take about a year. Sigh.

New Update:

3 days ago i started curing my second batch of bacon. This time i bought free from pork shoulders about 8kgs in all (better quality meat). The first time round i bought 4kgs, and when it tastes this good…..it doesn’t last long let me tell ya!

I procured a vacuum pack this time round to make things more simple. So i cured the meat, this time with more sugar as i screwed measuring last time (was working from the maple bacon recipe and just omitted the maple). I added roughly 2g extra of Kosher salt (not pink salt), as last time it was not quite a salty as i would have liked.

So after even just one day i noticed that the liquid drawn from the pork was as i would have expected the first time round. The pork is already turning that nice pinkish red. I have a feeling this is gonna be some good bacon!

Im going to leave 12 days, thats what i did last time. Then am going to do as you suggested and cold smoke for 4-6 hrs on my gas grill without any heat, only the A-Maze-N Smoker going. Then i will leave in fridge for 1-2 days before slow cooking to internal temp of 135-140.

Questions:

1. Rather than stink out house i was going to use the gas grill to heat up to 135. Do you see any issues with doing so?

2. When cold smoking outside, do i have to watch out for anything such as the meat going off? I assume the smoke will keep bugs and critters away? and the bears 🙂

3. The only reason we bring up to 135-140 is to make it easier to slice?

Cheers once again!

Using more salt will draw out more liquid.

12 days will be fine unless you have really thick slabs.

No problem using the gas grill as long as you keep the bacon away from direct heat and don’t let the temperature in the grill get over 200F. However, if the bacon gets exposed to direct heat, it will cook and the texture won’t be as good. Also, your grill won’t add any smoke flavour unless you run the AMZN while you are cooking it.

You don’t have to worry about the bacon going bad during the cold smoke. That is why you cure it. The nitrites inhibit bacterial growth. As for bears and insects, the smoke should deter them.

Yes. The main reason I cook to 135 is to make it easier to slice. However, as I slow cook it in my smoker, it does add smoke flavour. I actually find the texture of just cold smoked slightly superior but it is such a pain to slice with my POS slicer. However, I frequently do some back (Canadian) bacon to 155 F because I have friends who like to eat it cold.

I’m interested in making the buckboard bacon. I see that you use kosher salt. Please tell me which brand you use since the sodium content in these two products varies greatly.

Diamond Crystal Kosher Salt has 280mg Sodium per 1/4 tsp. Morton Kosher Salt has 480mg Sodium per 1/4 tsp.

I used Diamond Crystal but I’m afraid it will not be salty enough if your recipe used the Morton brand.

Also, would the difference in sodium content affect the curing process?

Your response is greatly appreciated!

I used Morton. The exact amount of salt isn’t critical, just a matter of taste as long as you have enough to draw out some liquid in the curing process. I have used as little as 8 ml per KG trying to reduce sodium content. It cured fine, I just like it saltier.